Business Problem:

Picture this: You're organizing a company retreat, a school assembly, or perhaps a community gathering. As the organizer, you're keen to ensure everyone is engaged and enjoying themselves. But how can you gauge the collective mood of the group? That's where this project comes in. The aim is to develop a system capable of analyzing group images to identify the emotions of the individuals within them. By employing deep learning models and algorithms, we aim to identify whether the group is demonstrating happiness, enthusiasm, apprehension, or any other emotional state.

Such a system holds immense potential for various applications. For businesses, it could provide valuable insights into employee satisfaction during team-building events or meetings. In educational settings, it could help educators assess student engagement during lectures or group activities. Even event organizers could leverage this tool to ensure attendees are having a positive experience. Ultimately, by decoding group emotions through visual data, we strive to enhance social dynamics and foster environments favorable to collective well-being and productivity.

Existing Solution and Limitations:

Understanding group emotions from group images is challenging because it involves identifying how each person in the group feels, which can be different for everyone. Traditional methods often treat the whole group as feeling the same way, missing the individual emotions. Also, analyzing group pictures accurately means needing to pick out emotions from both the whole group and each person's face, which can be hard because of different lighting and facial expressions. Plus, emotions can be subtle and vary based on the situation, making it tricky to get it right. Finally, doing this quickly and accurately is important for tasks like managing events or customer interactions.

Proposed Solution:

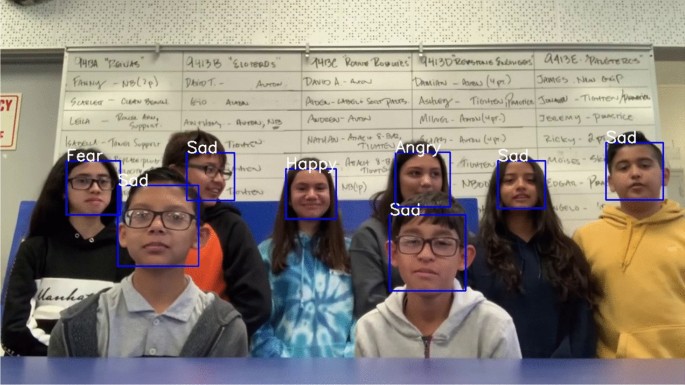

This project aims to redefine the way we perceive and interpret group emotions by implementing an approach that takes into account the individual emotions within a group. Instead of solely analyzing group images as a whole, our solution involves employing deep learning models to extract emotions of individuals given group images. We strive to create a comprehensive understanding of group emotions that captures the rich diversity of feelings present among group members.

Technical Objectives

- Face Detection and Extraction:

- Given an image of a group of people, extract and isolate faces of individual people in the image.

- Use pretrained models such as YOLOv8, YOLOv8 Face, Single-Shot Multibox Detector (SSD), or non-ML algorithmic techniques such as HaarCascade to extract these individual faces.

- Emotion Classification:

- Once the individual faces have been extracted, identify the emotion of each of the faces in the given image. Use techniques such as majority voting to identify the emotion of the entire group.

- Use Facial Attribute Analysis from the DeepFace library to analyze individual emotions. Feel free to explore other Facial Emotion Recognition models.

- Labeling, Validation and Evaluation:

- To enable performance validation of the pipeline, we have scraped about 3,000 group images from the internet.

- Label all or a subset of images manually for faces and emotions of each of the faces. You can use a tool such as Label Studio for the same. If you choose to label a subset of images, ensure that there is a diversity in the kind of images you include in your subset.

- Use the labeled images to validate the performance of your solution. Benchmark your solution for latency, as well as against statistical metrics such as Intersection over Union (IoU), Accuracy, Precision, Recall, etc.
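As a rough illustration of the detection metrics named above, the sketch below scores predicted face boxes against manually labeled boxes for one image. It is a minimal example under stated assumptions, not the notebook's evaluation code: the function names, the greedy one-to-one matching, the 0.5 IoU threshold, and the sample boxes are all hypothetical.

```python
def iou_xyxy(a, b):
    """Intersection over Union of two (x1, y1, x2, y2) boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0, ix2 - ix1) * max(0, iy2 - iy1)
    area_a = max(0, a[2] - a[0]) * max(0, a[3] - a[1])
    area_b = max(0, b[2] - b[0]) * max(0, b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def detection_precision_recall(pred_boxes, gt_boxes, iou_thr=0.5):
    """Greedy one-to-one matching of predictions to ground-truth boxes."""
    unmatched_gt = set(range(len(gt_boxes)))
    true_pos = 0
    for p in pred_boxes:
        best_j, best_iou = None, 0.0
        for j in unmatched_gt:
            v = iou_xyxy(p, gt_boxes[j])
            if v > best_iou:
                best_j, best_iou = j, v
        if best_j is not None and best_iou >= iou_thr:
            true_pos += 1
            unmatched_gt.remove(best_j)  # each labeled face can match only once
    precision = true_pos / len(pred_boxes) if pred_boxes else 0.0
    recall = true_pos / len(gt_boxes) if gt_boxes else 0.0
    return precision, recall


# Hypothetical labels and predictions for a single image
gt = [(10, 10, 50, 50), (100, 100, 140, 140)]
pred = [(12, 12, 50, 50), (300, 300, 340, 340), (101, 99, 141, 139)]
precision, recall = detection_precision_recall(pred, gt)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here two of the three predictions overlap a labeled face, so precision is 2/3 while recall is 1.0. Averaging these values over all labeled images gives dataset-level numbers.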

The DeepFace library supports both face detection/extraction and emotion recognition. However, since it comes with a plethora of models and options (called backends), you need to weigh the tradeoff between statistical performance and scalability. Explore as many options as you can to ensure that your analysis and solution are comprehensive. Also, consider exploring Super Resolution techniques for improving image quality.

!gcloud config list --format "text(core.project)"

!gcloud auth list

FILE_ID = "17UoqIa5vzUVglQccnSDtA3w6ZYggrHRh"

ZIP_PATH = "/content/group_emotion_dataset.zip"

EXTRACT_DIR = "/content/group_emotion_dataset"

GCS_BUCKET = "ranjana-group-emotion-data" # <-- change this

GCS_PREFIX = "group_emotion_data" # <-- change if you want

!gsutil ls gs://{GCS_BUCKET} >/dev/null && echo "Bucket access OK" || echo "No access to bucket"

!pip -q install gdown

import gdown

url = f"https://drive.google.com/uc?id={FILE_ID}"

gdown.download(url, ZIP_PATH, quiet=False)

import os

print("ZIP exists:", os.path.exists(ZIP_PATH))

print("ZIP size:", os.path.getsize(ZIP_PATH))

import zipfile, os

os.makedirs(EXTRACT_DIR, exist_ok=True)

with zipfile.ZipFile(ZIP_PATH, "r") as z:
    z.extractall(EXTRACT_DIR)

# quick peek
for root, dirs, files in os.walk(EXTRACT_DIR):
    print("Top extracted folder:", root)
    print("Top extracted directory:", dirs)
    print("Example files:", files[:5])

SRC_DIR = os.path.join(EXTRACT_DIR, "Scraped-Dataset for GroupEmotion")

assert os.path.exists(SRC_DIR), f"Not found: {SRC_DIR}"

print("Source folder:", SRC_DIR)

print("Example entries:", os.listdir(SRC_DIR)[:10])

!gsutil -m rsync -r "{SRC_DIR}" "gs://{GCS_BUCKET}/{GCS_PREFIX}"

Exploratory Data Analysis

import random

from google.cloud import storage

client = storage.Client()

bucket = client.bucket(GCS_BUCKET)

# Collect image blobs (no stdout flooding)

image_blobs = [

blob for blob in client.list_blobs(bucket, prefix=GCS_PREFIX)

if blob.name.lower().endswith((".jpg", ".jpeg", ".png", ".webp"))

]

print("Image count:", len(image_blobs))

assert len(image_blobs) > 0, "No images found in the given GCS path."

import os

# Pick one at random

blob = random.choice(image_blobs)

print("Selected image:", blob.name)

LOCAL_PATH = "/content/random_image.jpg"

blob.download_to_filename(LOCAL_PATH)

print("Downloaded to:", LOCAL_PATH)

from PIL import Image

from IPython.display import display

display(Image.open(LOCAL_PATH))

assert len(image_blobs) >= 2, "Need at least 2 images in this prefix."

picked = random.sample(image_blobs, 2)

local_paths = []

os.makedirs("/content/bakeoff", exist_ok=True)

for i, b in enumerate(picked, 1):
    lp = f"/content/bakeoff/img{i}_" + os.path.basename(b.name)
    b.download_to_filename(lp)
    local_paths.append(lp)
    print(f"Downloaded {i}:", b.name, "->", lp)

local_paths

display(Image.open(local_paths[0]))

display(Image.open(local_paths[1]))

Expected Task-flow

- Detect faces from group images

- Extract faces.

- Analyze Emotions.

- Calculate avg emotions.
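The last step of the task flow can be done in more than one way. The sketch below shows two options side by side: averaging per-face emotion scores, and majority voting over each face's top emotion. It is an illustration only. The function name `aggregate_group_emotion` and the input shape (one non-empty dict of emotion scores per face) are assumptions made here, and the scores are made up.

```python
from collections import Counter


def aggregate_group_emotion(face_scores):
    """face_scores: list of non-empty {emotion: score} dicts, one per face."""
    if not face_scores:
        return None, {}, None
    # Option 1: average each emotion's score across faces (missing = 0)
    emotions = sorted({e for scores in face_scores for e in scores})
    avg = {
        e: sum(s.get(e, 0.0) for s in face_scores) / len(face_scores)
        for e in emotions
    }
    avg_label = max(avg, key=avg.get)
    # Option 2: majority vote over each face's highest-scoring emotion
    votes = Counter(max(s, key=s.get) for s in face_scores)
    vote_label = votes.most_common(1)[0][0]
    return avg_label, avg, vote_label


# Hypothetical scores for three faces in one group image
faces = [
    {"happy": 0.90, "neutral": 0.10},
    {"happy": 0.40, "neutral": 0.60},
    {"happy": 0.45, "neutral": 0.55},
]
avg_label, avg, vote_label = aggregate_group_emotion(faces)
print("average:", avg_label, "| majority vote:", vote_label)
```

In this example the two methods disagree: one very happy face pulls the average toward "happy", while two of the three faces vote "neutral". Which behavior is preferable depends on whether strong individual expressions should outweigh head counts.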

Step 1: Detect Faces

Compare DeepFace/RetinaFace with YOLO11 for face detection

!pip -q install --upgrade "protobuf>=6.31.1,<7"

!pip -q install --upgrade "numpy==1.26.4" "pillow==11.1.0"

!pip -q install --upgrade opencv-python-headless

!pip -q install --upgrade deepface ultralytics

# Keep platform-compatible core libraries

!pip install -q protobuf==4.25.3

!pip install -q --upgrade numpy pillow

# Install only what you need

!pip install -q opencv-python-headless

!pip install -q deepface

!pip install -q ultralytics

import numpy
import google.protobuf  # the protobuf package is imported as google.protobuf
import cv2
from deepface import DeepFace
from ultralytics import YOLO

print("NumPy:", numpy.__version__)
print("Protobuf:", google.protobuf.__version__)
print("OpenCV:", cv2.__version__)

! pip install protobuf

import sys

import os

# Uninstall all potentially conflicting packages first

# This helps in starting with a cleaner slate for dependency resolution

!pip uninstall -y fer deepface ultralytics opencv-python opencv-python-headless numpy protobuf Pillow

# Install a protobuf version known to be more compatible with TensorFlow/Ultralytics

!pip install -q protobuf==4.25.3

# Install numpy and Pillow to specific versions that are often compatible

!pip install -q numpy==1.26.4 # A common compatible version for various deep learning libraries

!pip install -q Pillow==10.3.0 # Update Pillow to a more recent, compatible version

# Install the necessary libraries in a specific order to help resolve dependencies

!pip install -q opencv-python-headless

# Install deepface, which also has its own set of dependencies

!pip install -q deepface

# Install ultralytics for YOLO model

!pip install -q ultralytics

print("Installation attempt complete. Please check the output for any remaining dependency warnings.")

import sys

sys.executable

Detector A: DeepFace + RetinaFace (face extraction)

from deepface import DeepFace

import cv2

import numpy as np

def detect_retinaface(img_path):
    # Run RetinaFace through DeepFace and return a list of {x, y, w, h} dicts
    faces = DeepFace.extract_faces(
        img_path=img_path,
        detector_backend="retinaface",
        enforce_detection=False,
        align=False
    )
    areas = []
    for f in faces:
        area = f.get("facial_area", None)
        if area and all(k in area for k in ["x","y","w","h"]):
            areas.append(area)
    return areas

Detector B: YOLO (from the YOLO11 Face Emotion repo). That repo’s README shows inference using YOLO('best.onnx').

We’ll download best.onnx and run it with Ultralytics.


# Re-initialize YOLO here after installations to ensure it uses correct dependencies

# Download model from the GitHub repo (raw file)

!wget -q -O best.onnx https://github.com/alihassanml/Yolo11-Face-Emotion-Detection/raw/main/best.onnx

from ultralytics import YOLO

import cv2

import numpy as np

yolo = YOLO("best.onnx", task = "detect")

def detect_yolo(img_path, conf=0.25):
    # Repo uses grayscale->3ch preprocessing; we’ll follow it for fairness
    bgr = cv2.imread(img_path)
    gray = cv2.cvtColor(bgr, cv2.COLOR_BGR2GRAY)
    gray3 = cv2.merge([gray, gray, gray])
    res = yolo.predict(gray3, conf=conf, verbose=False)[0]
    boxes = res.boxes.xyxy.cpu().numpy() if res.boxes is not None else []
    # Convert (x1, y1, x2, y2) boxes to the same {x, y, w, h} dicts RetinaFace returns
    areas = []
    for x1, y1, x2, y2 in boxes:
        areas.append({"x": int(x1), "y": int(y1), "w": int(x2-x1), "h": int(y2-y1)})
    return areas

# Helper function to draw bounding boxes and display (for dict-style detections)

import matplotlib.pyplot as plt # Ensure matplotlib is imported for this function

def plot_detections_from_dicts(img_bgr_input, detections, title):
    img_copy = img_bgr_input.copy()
    for detection in detections:
        # Extract x, y, w, h from the dictionary
        x = detection['x']
        y = detection['y']
        w = detection['w']
        h = detection['h']
        cv2.rectangle(img_copy, (x, y), (x + w, y + h), (0, 255, 0), 2)
    # Convert BGR to RGB for displaying with matplotlib
    img_rgb = cv2.cvtColor(img_copy, cv2.COLOR_BGR2RGB)
    plt.figure(figsize=(10, 10))
    plt.imshow(img_rgb)
    plt.title(title)
    plt.axis('off')
    plt.show()

img1 = local_paths[0]

img2 = local_paths[1]

import matplotlib.pyplot as plt

print("Processing Image 1:", img1)

# Detect faces with RetinaFace

retinaface_detections_img1 = detect_retinaface(img1)

print(f"RetinaFace (img1) detected {len(retinaface_detections_img1)} faces")

# Detect faces with YOLO

yolo_detections_img1 = detect_yolo(img1)

print(f"YOLO (img1) detected {len(yolo_detections_img1)} faces")

print("Processing Image 2:", img2)

# Detect faces with RetinaFace

retinaface_detections_img2 = detect_retinaface(img2)

print(f"RetinaFace (img2) detected {len(retinaface_detections_img2)} faces")

# Detect faces with YOLO

yolo_detections_img2 = detect_yolo(img2)

print(f"YOLO (img2) detected {len(yolo_detections_img2)} faces")

import cv2

# Read images into numpy arrays

img1_bgr = cv2.imread(img1)

img2_bgr = cv2.imread(img2)

print("Displaying results for Image 1:")

plot_detections_from_dicts(img1_bgr, retinaface_detections_img1, "Image 1: RetinaFace Detections")

plot_detections_from_dicts(img1_bgr, yolo_detections_img1, "Image 1: YOLO Detections")

print("Displaying results for Image 2:")

plot_detections_from_dicts(img2_bgr, retinaface_detections_img2, "Image 2: RetinaFace Detections")

plot_detections_from_dicts(img2_bgr, yolo_detections_img2, "Image 2: YOLO Detections")

Let us also evaluate the following for face detection:

# Yolov8-face

import gdown, os

# from the yolov8-face repo README google drive link

YOLOV8N_FACE_FILE_ID = "1qcr9DbgsX3ryrz2uU8w4Xm3cOrRywXqb" # yolov8n-face.pt

WEIGHTS_PATH = "/content/yolov8n-face.pt"

url = f"https://drive.google.com/uc?id={YOLOV8N_FACE_FILE_ID}"

gdown.download(url, WEIGHTS_PATH, quiet=False)

print("Downloaded:", os.path.exists(WEIGHTS_PATH), WEIGHTS_PATH)

#SSD (OpenCV DNN) model files

!wget -q -O /content/deploy.prototxt \
    https://raw.githubusercontent.com/opencv/opencv/master/samples/dnn/face_detector/deploy.prototxt

!wget -q -O /content/res10_300x300_ssd_iter_140000.caffemodel \
    https://github.com/opencv/opencv_3rdparty/raw/dnn_samples_face_detector_20170830/res10_300x300_ssd_iter_140000.caffemodel

!ls -lh /content/deploy.prototxt /content/res10_300x300_ssd_iter_140000.caffemodel

import cv2

import numpy as np

import matplotlib.pyplot as plt

from ultralytics import YOLO

import time

# ---------- YOLOv8-face ----------

yolo_face = YOLO("/content/yolov8n-face.pt", task="detect") # explicit task avoids warning

def detect_yolov8_face(img_bgr, conf=0.25):
    # Ultralytics treats numpy arrays as OpenCV-style BGR images, so pass the BGR array as-is
    r = yolo_face.predict(img_bgr, conf=conf, verbose=False)[0]
    boxes = []
    if r.boxes is not None and len(r.boxes) > 0:
        xyxy = r.boxes.xyxy.cpu().numpy()
        for x1, y1, x2, y2 in xyxy:
            boxes.append((int(x1), int(y1), int(x2), int(y2)))
    return boxes

# ---------- Haar Cascade ----------

# Classic Viola-Jones style detector; fast but can struggle with pose/scale/blur.
haar_path = cv2.data.haarcascades + "haarcascade_frontalface_default.xml"
haar = cv2.CascadeClassifier(haar_path)

def detect_haar(img_bgr):
    gray = cv2.cvtColor(img_bgr, cv2.COLOR_BGR2GRAY)
    rects = haar.detectMultiScale(gray, scaleFactor=1.1, minNeighbors=5, minSize=(24, 24))
    # Convert (x, y, w, h) rectangles to (x1, y1, x2, y2)
    boxes = [(int(x), int(y), int(x+w), int(y+h)) for (x,y,w,h) in rects]
    return boxes

# ---------- SSD (OpenCV DNN face detector) ----------

# ResNet-10 SSD face detector (Caffe) used widely with OpenCV DNN.
ssd_net = cv2.dnn.readNetFromCaffe(
    "/content/deploy.prototxt",
    "/content/res10_300x300_ssd_iter_140000.caffemodel"
)

def detect_ssd(img_bgr, conf=0.5):
    (h, w) = img_bgr.shape[:2]
    blob = cv2.dnn.blobFromImage(img_bgr, 1.0, (300, 300), (104.0, 177.0, 123.0))
    ssd_net.setInput(blob)
    det = ssd_net.forward()
    boxes = []
    for i in range(det.shape[2]):
        score = float(det[0, 0, i, 2])
        if score >= conf:
            # Detections are normalized to [0, 1]; scale back to pixel coordinates
            box = det[0, 0, i, 3:7] * np.array([w, h, w, h])
            (x1, y1, x2, y2) = box.astype("int")
            boxes.append((int(x1), int(y1), int(x2), int(y2)))
    return boxes

# ---------- Helpers ----------

def draw_boxes(img_bgr, boxes, thickness=2):
    out = img_bgr.copy()
    for (x1, y1, x2, y2) in boxes:
        cv2.rectangle(out, (x1, y1), (x2, y2), (0, 255, 255), thickness)
    return out

def run_one(img_path, yolo_conf=0.25, ssd_conf=0.5):
    img_bgr = cv2.imread(img_path)
    assert img_bgr is not None, f"Failed to read: {img_path}"

    # Time each detector on the same image
    t0 = time.time(); yb = detect_yolov8_face(img_bgr, conf=yolo_conf); yt = time.time()-t0
    t0 = time.time(); hb = detect_haar(img_bgr); ht = time.time()-t0
    t0 = time.time(); sb = detect_ssd(img_bgr, conf=ssd_conf); st = time.time()-t0

    vis_y = cv2.cvtColor(draw_boxes(img_bgr, yb), cv2.COLOR_BGR2RGB)
    vis_h = cv2.cvtColor(draw_boxes(img_bgr, hb), cv2.COLOR_BGR2RGB)
    vis_s = cv2.cvtColor(draw_boxes(img_bgr, sb), cv2.COLOR_BGR2RGB)

    plt.figure(figsize=(20, 7))
    plt.subplot(1,3,1); plt.imshow(vis_y); plt.axis("off")
    plt.title(f"YOLOv8-face | n={len(yb)} | {yt:.2f}s | conf={yolo_conf}")
    plt.subplot(1,3,2); plt.imshow(vis_h); plt.axis("off")
    plt.title(f"Haar Cascade | n={len(hb)} | {ht:.2f}s")
    plt.subplot(1,3,3); plt.imshow(vis_s); plt.axis("off")
    plt.title(f"SSD (OpenCV DNN) | n={len(sb)} | {st:.2f}s | conf={ssd_conf}")
    plt.suptitle(img_path)
    plt.show()

    return {"img": img_path,
            "yolo_faces": len(yb), "yolo_time": yt,
            "haar_faces": len(hb), "haar_time": ht,
            "ssd_faces": len(sb), "ssd_time": st}

results = []

for p in local_paths:
    results.append(run_one(p, yolo_conf=0.25, ssd_conf=0.5))

results

RetinaFace detected 71 faces in image 1, while YOLOv8-face detected 56. Face counts alone do not show which detector is better, so RetinaFace and YOLOv8-face need a closer comparison.

What to compare (beyond “#faces”)

- Match detections and compute overlap (IoU). We want to know: are they finding the same faces? Which one finds extra faces the other misses? Method: for each RetinaFace box, find the best-matching YOLO box by IoU; count it as a match if IoU ≥ 0.5 (or 0.3 for small faces).

- Usability for emotion crops (box quality). Compute box stats: the box size distribution (min(w, h)), to see how many faces are tiny; the aspect ratio distribution, to see whether boxes are face-like or odd; and the number of out-of-bounds or invalid boxes.

- Face crop “quality” score. For each crop (from each detector), compute a blur score (Laplacian variance) and, optionally, brightness or contrast. Then compare how many detected faces are actually usable (e.g., min size ≥ 24 px and blur score ≥ threshold).

- Speed and stability. Measure timing per image plus failure rate over 50 images.
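For the speed and stability item, a small harness along the following lines could collect per-image latency and a failure rate for any detector function. This is a sketch under assumptions: `benchmark_detector` and `fake_detector` are hypothetical names, a "failure" is taken to mean a raised exception, and the stand-in detector exists only so the example runs without model weights.

```python
import statistics
import time


def benchmark_detector(detect_fn, inputs):
    """Time detect_fn on each input and count failures (raised exceptions)."""
    latencies, failures = [], 0
    for item in inputs:
        start = time.perf_counter()
        try:
            detect_fn(item)
        except Exception:
            failures += 1
            continue
        latencies.append(time.perf_counter() - start)
    return {
        "n": len(inputs),
        "failures": failures,
        "failure_rate": failures / len(inputs) if inputs else 0.0,
        "mean_s": statistics.mean(latencies) if latencies else None,
        "median_s": statistics.median(latencies) if latencies else None,
    }


# Stand-in detector for illustration: fails on a hypothetical unreadable file
def fake_detector(path):
    if path.endswith(".bad"):
        raise ValueError("unreadable image")
    return [(0, 0, 10, 10)]


report = benchmark_detector(fake_detector, ["a.jpg", "b.jpg", "c.bad", "d.jpg"])
print(report)
```

In the notebook, `detect_fn` would be one of the detector functions and `inputs` a list of about 50 local image paths. Running one warm-up call before timing avoids counting model load time in the first measurement.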

# compute matching between the two sets

## report:

### matched faces

### RetinaFace-only faces

### YOLO-only faces

#draw only the “extras” so I can visually inspect what is missed.

import numpy as np

def to_xyxy(box):
    # box is dict {x,y,w,h} or tuple (x1,y1,x2,y2)
    if isinstance(box, dict):
        x1, y1 = box["x"], box["y"]
        x2, y2 = x1 + box["w"], y1 + box["h"]
        return (x1, y1, x2, y2)
    return box

def iou(a, b):
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b
    inter_x1, inter_y1 = max(ax1, bx1), max(ay1, by1)
    inter_x2, inter_y2 = min(ax2, bx2), min(ay2, by2)
    iw, ih = max(0, inter_x2 - inter_x1), max(0, inter_y2 - inter_y1)
    inter = iw * ih
    area_a = max(0, ax2-ax1) * max(0, ay2-ay1)
    area_b = max(0, bx2-bx1) * max(0, by2-by1)
    union = area_a + area_b - inter + 1e-9  # epsilon avoids division by zero
    return inter / union

def match_boxes(rf_boxes, yolo_boxes, thr=0.5):
    # returns: matches list of (rf_idx, y_idx, iou), plus unmatched indices
    rf_xy = [to_xyxy(b) for b in rf_boxes]
    yo_xy = [to_xyxy(b) for b in yolo_boxes]
    matches = []
    used_y = set()
    # Greedy matching: each RetinaFace box takes its best unused YOLO box
    for i, r in enumerate(rf_xy):
        best_j, best_iou = None, 0.0
        for j, y in enumerate(yo_xy):
            if j in used_y:
                continue
            v = iou(r, y)
            if v > best_iou:
                best_iou, best_j = v, j
        if best_j is not None and best_iou >= thr:
            matches.append((i, best_j, best_iou))
            used_y.add(best_j)
    rf_matched = set(i for i,_,_ in matches)
    yo_matched = set(j for _,j,_ in matches)
    rf_only = [i for i in range(len(rf_boxes)) if i not in rf_matched]
    yo_only = [j for j in range(len(yolo_boxes)) if j not in yo_matched]
    return matches, rf_only, yo_only

# Visualize
import cv2, matplotlib.pyplot as plt

def draw_xyxy(img_bgr, boxes_xyxy, label, thickness=2):
    out = img_bgr.copy()
    for (x1,y1,x2,y2) in boxes_xyxy:
        cv2.rectangle(out, (x1,y1), (x2,y2), (0,255,255), thickness)
    out = cv2.cvtColor(out, cv2.COLOR_BGR2RGB)
    plt.figure(figsize=(10,6))
    plt.imshow(out); plt.axis("off"); plt.title(label)
    plt.show()

def compare_one_image(img_path, thr=0.5):
    img_bgr = cv2.imread(img_path)
    rf = detect_retinaface(img_path) # list of dicts {x,y,w,h}
    yo = detect_yolov8_face(img_bgr, conf=0.25) # list of (x1,y1,x2,y2)
    matches, rf_only_idx, yo_only_idx = match_boxes(rf, yo, thr=thr)

    print(f"Image: {img_path}")
    print(f"RetinaFace: {len(rf)} | YOLOv8-face: {len(yo)}")
    print(f"Matched (IoU≥{thr}): {len(matches)}")
    print(f"RetinaFace-only: {len(rf_only_idx)} | YOLO-only: {len(yo_only_idx)}")

    # Build extra boxes for visualization
    rf_only = [to_xyxy(rf[i]) for i in rf_only_idx]
    yo_only = [yo[j] for j in yo_only_idx]
    draw_xyxy(img_bgr, rf_only, f"RetinaFace-only boxes (missed by YOLO) | n={len(rf_only)}")
    draw_xyxy(img_bgr, yo_only, f"YOLO-only boxes (missed by RetinaFace) | n={len(yo_only)}")

# Run on your two images
for p in local_paths:
    compare_one_image(p, thr=0.5)

# Crop quality: size + blur threshold

import numpy as np

def blur_score(gray_crop):
    # Higher = sharper
    return cv2.Laplacian(gray_crop, cv2.CV_64F).var()

def crop_and_score(img_bgr, box_xyxy):
    # Clamp the box to the image, crop it, and return (width, height, blur score)
    x1,y1,x2,y2 = box_xyxy
    x1,y1 = max(0,x1), max(0,y1)
    x2,y2 = min(img_bgr.shape[1], x2), min(img_bgr.shape[0], y2)
    crop = img_bgr[y1:y2, x1:x2]
    if crop.size == 0:
        return None
    gray = cv2.cvtColor(crop, cv2.COLOR_BGR2GRAY)
    s = blur_score(gray)
    return (x2-x1, y2-y1, s)

def usable_rate(img_path, rf_boxes, yo_boxes, min_side=24, min_blur=50.0):
    img_bgr = cv2.imread(img_path)
    rf_xy = [to_xyxy(b) for b in rf_boxes]
    yo_xy = [to_xyxy(b) for b in yo_boxes]

    def rate(boxes):
        # Count crops that pass both the size and the sharpness threshold
        usable = 0
        scores = []
        for b in boxes:
            r = crop_and_score(img_bgr, b)
            if r is None:
                continue
            w,h,s = r
            scores.append((min(w,h), s))
            if min(w,h) >= min_side and s >= min_blur:
                usable += 1
        return usable, len(boxes), scores

    rf_u, rf_n, rf_scores = rate(rf_xy)
    yo_u, yo_n, yo_scores = rate(yo_xy)
    print(f"min_side={min_side}px, min_blur={min_blur}")
    print(f"RetinaFace usable: {rf_u}/{rf_n} = {rf_u/max(rf_n,1):.2%}")
    print(f"YOLO usable: {yo_u}/{yo_n} = {yo_u/max(yo_n,1):.2%}")

# Run on each image
for p in local_paths:
    rf = detect_retinaface(p)
    img_bgr = cv2.imread(p)
    yo = detect_yolov8_face(img_bgr, conf=0.25)
    print("\n===", p)
    usable_rate(p, rf, yo, min_side=24, min_blur=50.0)

Why RetinaFace?

What the numbers actually say

Image 1 (Cheering / crowd, many faces):

RetinaFace: 29 / 31 usable → 93.55%

YOLOv8-face: 24 / 27 usable → 88.89%

Interpretation:

YOLO is more conservative: it returns fewer detections (27 vs. 31), most of which are clean.

RetinaFace finds more faces overall, and most of them are usable.

RetinaFace recovers 5 additional usable faces (29 vs. 24) that YOLO misses.

Image 2 (Group scene, harder conditions):

RetinaFace: 5 / 5 usable → 100%

YOLOv8-face: 5 / 5 usable → 100%

Interpretation: Both detectors find the same 5 faces' worth of detections on this harder image, and every crop passes the quality filter, so image 2 does not separate the two detectors.

For the key metric "Total number of usable faces per image", RetinaFace wins on img1 and ties with YOLOv8-face on img2:

| Image | RetinaFace usable | YOLO usable |
|---|---|---|
| img1 | 29 | 24 |
| img2 | 5 | 5 |
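The usable rates follow directly from the usable and total counts reported for the two images. A short check of that arithmetic, using only those reported numbers (the variable names are chosen here for illustration):

```python
# (usable, total) pairs copied from the results reported above
results = {
    ("img1", "RetinaFace"): (29, 31),
    ("img1", "YOLOv8-face"): (24, 27),
    ("img2", "RetinaFace"): (5, 5),
    ("img2", "YOLOv8-face"): (5, 5),
}

# Usable rate = usable crops / total detections
rates = {key: usable / total for key, (usable, total) in results.items()}
for (img, detector), rate in rates.items():
    print(f"{img} {detector}: {rate:.2%}")

# Difference in absolute usable faces on img1
extra_usable_img1 = (
    results[("img1", "RetinaFace")][0] - results[("img1", "YOLOv8-face")][0]
)
print("Extra usable faces from RetinaFace on img1:", extra_usable_img1)
```

On img1 RetinaFace leads on both the rate and the absolute count, with 5 more usable faces. On img2 the detectors are tied.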

Rationale for Selecting RetinaFace over YOLOv8-Face for Face Detection

In this project, I evaluated two modern face detection approaches, RetinaFace and YOLOv8-face, to determine which detector is most suitable for downstream facial emotion recognition (FER) and group emotion analysis in unconstrained crowd images. The selection was based on empirical evaluation, not model popularity or speed alone.

Task Requirements Drive Detector Choice

The primary goal of face detection in this pipeline is not real-time inference, but:

- maximizing the number of usable face crops for emotion labeling and training,

- handling crowded scenes with many small, partially occluded faces,

- preserving recall so that group emotion aggregation is not biased by missed individuals.

Therefore, high recall with controllable noise is preferred over conservative detection.

Empirical Results on Project Data

We evaluated both detectors on representative images from the dataset using identical post-processing and quality filters (minimum face size and blur threshold). Observed results:

| Image | Detector | Total Faces | Usable Faces |
| ------- | ----------- | ----------- | ------------ |
| Image 1 | RetinaFace | 31 | 29 |
| Image 1 | YOLOv8-face | 27 | 24 |
| Image 2 | RetinaFace | 5 | 5 |
| Image 2 | YOLOv8-face | 5 | 5 |

RetinaFace matched or exceeded YOLOv8-face on both measures. On Image 1 it produced more usable faces (29 vs. 24) at a higher usable rate (93.55% vs. 88.89%); on Image 2 the two detectors tied at 5 of 5.

For group-level analysis, absolute usable face count is the more critical metric.

Recall vs Precision Trade-off

The detectors exhibit different design philosophies:

- YOLOv8-face is the more conservative detector and returned fewer detections, although on these two images its fraction of clean crops was not higher than RetinaFace's.

- RetinaFace prioritizes recall, detecting more faces including small and moderately blurred ones.

For this project:

- Missed faces cannot be recovered downstream.

- Extra detections can be filtered, down-weighted, or excluded using quality metrics.

Thus, high recall with explicit quality control is the safer and more flexible strategy.

Robustness to Crowded and Low-Quality Scenes

RetinaFace is specifically designed for:

- multi-scale face detection,

- dense crowd scenarios,

- small and partially occluded faces.

These properties are critical in real-world group images, where:

- face sizes vary dramatically,

- blur and pose are common,

- group emotion should reflect as many participants as possible.

YOLOv8-face performed well on medium-to-large faces but missed a non-trivial number of small yet usable faces in challenging scenes.

Compatibility with Downstream Emotion Modeling

The pipeline explicitly incorporates:

- face quality scoring (blur, size),

- selective labeling in Label Studio,

- quality-aware group aggregation.

This makes RetinaFace’s higher recall an advantage rather than a liability, since noisy detections are handled explicitly, not ignored.
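One way quality-aware group aggregation could work is to let each face vote with a weight equal to its crop quality, optionally dropping crops below a floor. The sketch below is an assumption-laden illustration, not the pipeline's implementation: `weighted_group_emotion`, the `quality` field in [0, 1], and the sample values are all hypothetical.

```python
def weighted_group_emotion(faces, min_quality=0.0):
    """faces: list of dicts with an 'emotion' label and a 'quality' weight in [0, 1]."""
    totals = {}
    for f in faces:
        if f["quality"] < min_quality:
            continue  # exclude crops below the quality floor
        # Down-weight rather than discard: low-quality crops still count a little
        totals[f["emotion"]] = totals.get(f["emotion"], 0.0) + f["quality"]
    if not totals:
        return None, totals
    return max(totals, key=totals.get), totals


# Hypothetical per-face predictions: one clear face, two poor crops
faces = [
    {"emotion": "happy", "quality": 0.9},
    {"emotion": "sad", "quality": 0.2},
    {"emotion": "sad", "quality": 0.3},
]
label, totals = weighted_group_emotion(faces)
print(label, totals)
```

An unweighted majority vote would return "sad" here (two faces against one), while the weighted vote returns "happy" because the two "sad" predictions come from low-quality crops. This is the sense in which extra, noisier detections can be kept without letting them dominate the group result.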

Final Decision

RetinaFace was selected as the primary face detector for this project because it:

- Produces a higher number of usable face crops per image

- Maintains robustness in crowded, real-world scenes

- Aligns better with group-level emotion analysis

- Allows principled downstream filtering and weighting

- Is widely validated in face analysis research

!pip -q install --upgrade "protobuf>=6.31.1,<7"

!pip -q install deepface opencv-python-headless google-cloud-storage tqdm

import os, csv, uuid, time

import cv2

import numpy as np

from tqdm import tqdm

from google.cloud import storage

from deepface import DeepFace

BUCKET_NAME = "ranjana-group-emotion-data"

SRC_PREFIX = "group_emotion_data" # images live here (end with /)

OUT_PREFIX = "group_emotion_out/retinaface_v1" # output root

CROPS_PREFIX = f"{OUT_PREFIX}/face_crops"

META_PREFIX = f"{OUT_PREFIX}/metadata"

- Blur score estimates sharpness using Laplacian variance

- Clamp box keeps bounding boxes within image boundaries

# Helper functions (blur score, safe crop, GCS upload)

def blur_score_laplacian(gray_crop: np.ndarray) -> float:
    # Higher means sharper
    return float(cv2.Laplacian(gray_crop, cv2.CV_64F).var())

def clamp_box(x, y, w, h, W, H):
    # Clip the box to the image bounds (W x H) and recompute its size
    x = max(0, int(x)); y = max(0, int(y))
    w = max(0, int(w)); h = max(0, int(h))
    x2 = min(W, x + w); y2 = min(H, y + h)
    w = max(0, x2 - x); h = max(0, y2 - y)
    return x, y, w, h

# Batch extractor

client = storage.Client()

bucket = client.bucket(BUCKET_NAME)

# Collect image blobs (safe, no gsutil ls -r)

image_blobs = [

b for b in client.list_blobs(BUCKET_NAME, prefix=SRC_PREFIX)

if b.name.lower().endswith((".jpg", ".jpeg", ".png", ".webp"))

]

print("Total source images:", len(image_blobs))

assert len(image_blobs) > 0, "No images found under SRC_PREFIX."

LOCAL_META = "/content/faces_metadata.csv"

tmp_img = "/content/tmp_image"

# Write CSV header once

fieldnames = [

"source_blob",

"source_filename",

"face_index",

"x","y","w","h",

"min_side",

"blur_score",

"detector_confidence",

"crop_blob",

"crop_gcs_uri",

]

with open(LOCAL_META, "w", newline="") as f:
    writer = csv.DictWriter(f, fieldnames=fieldnames)
    writer.writeheader()

total_faces = 0

failed_images = 0

for blob in tqdm(image_blobs[:20], desc="Extracting faces"):
    try:
        # Download image to local
        local_path = tmp_img + os.path.splitext(blob.name)[1].lower()
        blob.download_to_filename(local_path)

        img_bgr = cv2.imread(local_path)
        if img_bgr is None:
            failed_images += 1
            continue
        H, W = img_bgr.shape[:2]

        # RetinaFace detection + aligned face crop from DeepFace
        faces = DeepFace.extract_faces(
            img_path=local_path,
            detector_backend="retinaface",
            enforce_detection=False,
            align=True
        )

        # Append metadata rows and upload crops
        rows = []
        for i, fdict in enumerate(faces):
            area = fdict.get("facial_area", None)
            face_rgb = fdict.get("face", None)
            conf = fdict.get("confidence", None)
            if area is None or face_rgb is None:
                continue

            x, y, w, h = area["x"], area["y"], area["w"], area["h"]
            x, y, w, h = clamp_box(x, y, w, h, W, H)
            if w == 0 or h == 0:
                continue
            min_side = int(min(w, h))

            # face_rgb may be float in [0,1] depending on backend
            if face_rgb.dtype != np.uint8:
                face_rgb = (face_rgb * 255.0).clip(0, 255).astype(np.uint8)
            face_bgr = cv2.cvtColor(face_rgb, cv2.COLOR_RGB2BGR)
            gray = cv2.cvtColor(face_bgr, cv2.COLOR_BGR2GRAY)
            bscore = blur_score_laplacian(gray)

            # Create a stable-ish crop name
            src_base = os.path.splitext(os.path.basename(blob.name))[0]
            crop_name = f"{src_base}/face_{i:03d}_{uuid.uuid4().hex[:8]}.jpg"
            crop_blob_name = f"{CROPS_PREFIX}/{crop_name}"
            crop_gcs_uri = f"gs://{BUCKET_NAME}/{crop_blob_name}"

            # Save crop locally then upload
            local_crop = f"/content/crop_{uuid.uuid4().hex}.jpg"
            cv2.imwrite(local_crop, face_bgr, [int(cv2.IMWRITE_JPEG_QUALITY), 95])
            bucket.blob(crop_blob_name).upload_from_filename(local_crop)
            os.remove(local_crop)

            rows.append({
                "source_blob": blob.name,
                "source_filename": os.path.basename(blob.name),
                "face_index": i,
                "x": x, "y": y, "w": w, "h": h,
                "min_side": min_side,
                "blur_score": round(bscore, 3),
                "detector_confidence": None if conf is None else round(float(conf), 4),
                "crop_blob": crop_blob_name,
                "crop_gcs_uri": crop_gcs_uri,
            })

        # Append rows to CSV
        if rows:
            with open(LOCAL_META, "a", newline="") as f:
                writer = csv.DictWriter(f, fieldnames=fieldnames)
                writer.writerows(rows)
        total_faces += len(rows)

    except Exception as e:
        failed_images += 1
        # Keep going; log minimal info
        print("Failed on:", blob.name, "|", type(e).__name__, str(e)[:160])

print("Done.")

print("Total faces saved:", total_faces)

print("Failed images:", failed_images)

print("Local metadata:", LOCAL_META)

meta_blob_name = f"{META_PREFIX}/faces_metadata.csv"

bucket.blob(meta_blob_name).upload_from_filename(LOCAL_META)

print("Uploaded metadata to:")

print(f"gs://{BUCKET_NAME}/{meta_blob_name}")

print("Crops under:")

print(f"gs://{BUCKET_NAME}/{CROPS_PREFIX}/")

Pilot: Analyze the face crops for 20 images first

BUCKET_NAME = "ranjana-group-emotion-data"

META_BLOB = "group_emotion_out/retinaface_v1/metadata/faces_metadata.csv" # <-- your actual path

!pip -q install pandas

import pandas as pd

from google.cloud import storage

client = storage.Client()

bucket = client.bucket(BUCKET_NAME)

local_meta = "/content/faces_metadata.csv"

bucket.blob(META_BLOB).download_to_filename(local_meta)

df = pd.read_csv(local_meta)

df.head(), df.shape

print("Total face crops:", len(df))

print("Unique source images:", df["source_blob"].nunique())

print("\nmin_side summary:")

print(df["min_side"].describe())

print("\nblur_score summary:")

print(df["blur_score"].describe())

Why min_side and blur_score Matter for Face Emotion Recognition

After extracting face crops from group images, not all detected faces are equally useful for facial emotion recognition (FER). Faces in crowded scenes vary significantly in size, sharpness, occlusion, and pose. Before labeling or training a model, it is therefore essential to characterize the quality of each face crop.

In this cell, we examine two complementary quality indicators: face size (min_side) and image sharpness (blur_score).

- Face Size (min_side)

The variable min_side is defined as:

the minimum of the width and height of the face bounding box (in pixels)

This quantity serves as a proxy for the effective spatial resolution of facial features.

Why face size matters

Facial emotion recognition depends on subtle cues such as:

- mouth curvature

- eye openness

- eyebrow tension

- nasolabial folds

When a face is too small:

- these cues collapse into very few pixels

- upsampling introduces artifacts rather than information

- even human annotators struggle to assign a confident emotion label

As a rule of thumb, faces below roughly 20–30 pixels on the short side are unreliable for emotion analysis. For comparison, FER2013 images are 48×48 pixels, so crops in that size range carry far less detail than standard FER training data.

Using min_side (rather than area or max side) ensures that:

- both dimensions are sufficiently resolved

- extremely thin or degenerate bounding boxes are penalized
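To make the choice of min_side over area or max side concrete, here is a minimal sketch with two hypothetical bounding boxes of identical area. The box sizes and the helper name `min_side_of` are illustrative assumptions, not values from the dataset.

```python
def min_side_of(w: float, h: float) -> float:
    """Shorter side of a face bounding box, in pixels."""
    return min(w, h)

# Two hypothetical boxes (width, height) with the same area of 1600 px^2.
boxes = {
    "square": (40, 40),
    "thin": (160, 10),
}

for name, (w, h) in boxes.items():
    print(
        f"{name}: area={w * h}, max_side={max(w, h)}, "
        f"min_side={min_side_of(w, h)}"
    )
```

Area and max side rate the thin box as equal to or better than the square one, while min_side flags it as having only 10 px of resolution in one dimension.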

- Blur Score (blur_score)

The blur_score is computed using the variance of the Laplacian, a standard measure of high-frequency content in an image.

Intuitively:

- high blur score → more edges and fine detail

- low blur score → smoother, blurrier image
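The intuition above can be sketched with a small NumPy-only implementation of the variance of the Laplacian. This is an illustrative stand-in, not necessarily how blur_score was computed in the extraction step; with OpenCV, the common one-liner is `cv2.Laplacian(gray, cv2.CV_64F).var()`. The function name `laplacian_variance` and the synthetic images are assumptions made for the example.

```python
import numpy as np

def laplacian_variance(gray: np.ndarray) -> float:
    """Variance of a 4-neighbour discrete Laplacian over the image interior."""
    g = gray.astype(np.float64)
    lap = (
        g[:-2, 1:-1] + g[2:, 1:-1] + g[1:-1, :-2] + g[1:-1, 2:]
        - 4.0 * g[1:-1, 1:-1]
    )
    return float(lap.var())

rng = np.random.default_rng(0)

# Synthetic "sharp" image: pixel noise, i.e. lots of high-frequency content.
sharp = rng.integers(0, 256, size=(64, 64)).astype(np.float64)

# Crude blur: average each pixel with its 4 neighbours (edges replicated).
p = np.pad(sharp, 1, mode="edge")
blurred = (
    p[1:-1, 1:-1] + p[:-2, 1:-1] + p[2:, 1:-1] + p[1:-1, :-2] + p[1:-1, 2:]
) / 5.0

# Completely flat image: no edges at all.
flat = np.full((64, 64), 128.0)

for name, img in [("sharp", sharp), ("blurred", blurred), ("flat", flat)]:
    print(f"{name}: blur_score={laplacian_variance(img):.1f}")
```

The score drops as detail is smoothed away and reaches zero for the flat image, which is why a higher blur_score is read as a sharper crop.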

Why sharpness matters

Emotion recognition relies on crisp visibility of:

- eye contours

- mouth edges

- facial muscle boundaries

Motion blur, defocus, or heavy compression can obscure these cues, reducing both human labeling accuracy and model performance.

- Limitations of Blur Score in Crowded Scenes

Importantly, blur score alone is not a reliable indicator of emotion usability, especially in group images.

In crowded scenes:

- hair, clothing, and background texture contribute strong edges

- small faces can have artificially high blur scores

- a face may be “sharp” in a signal-processing sense but still unreadable semantically

For this reason, blur score is treated as a weak, supporting signal, not a decisive criterion.

- Why Both Metrics Are Needed Together

Face size and blur capture different failure modes:

| Metric | Detects | Misses |
|---|---|---|
| min_side | insufficient resolution | blur / motion |
| blur_score | defocus / motion blur | semantic clarity, face size |

By inspecting both distributions together, we can:

- understand the range of face quality in the dataset

- avoid premature filtering

- design a principled composite quality score later in the pipeline

- Purpose of This Analysis Cell

This cell does not filter data yet.

Instead, it:

- provides empirical insight into face crop quality

- motivates the need for soft quality scoring rather than hard thresholds

- informs later decisions on labeling prioritization and training data selection

In other words, this analysis step ensures that data quality decisions are evidence-based rather than arbitrary.

Key takeaway

Face emotion recognition performance is strongly influenced by face resolution and sharpness. Examining min_side and blur_score distributions allows us to characterize the usability of detected faces and motivates the use of a composite, soft quality score in subsequent stages.

import matplotlib.pyplot as plt

plt.figure(figsize=(8,5))

plt.hist(df["min_side"], bins=50)

plt.title("Distribution of min_side (px)")

plt.xlabel("min_side (px)")

plt.ylabel("count")

plt.show()

plt.figure(figsize=(8,5))

plt.hist(df["blur_score"], bins=50)

plt.title("Distribution of blur_score (Laplacian variance)")

plt.xlabel("blur_score")

plt.ylabel("count")

plt.show()

Observations from the Blur Score Distribution¶

The blur_score distribution is highly right-skewed, with the majority of faces clustered at low to moderate blur scores and a long tail extending to very high values.

Most faces fall within a relatively narrow blur range near the lower end, indicating that extreme blur is uncommon, but moderate blur is widespread.

A small number of faces exhibit very high blur scores (outliers). These are likely caused by strong edge responses from background texture, lighting artifacts, or high-contrast regions rather than truly sharp facial details.

There is no clear separation point in the blur score histogram that would naturally divide “usable” and “unusable” faces, suggesting that blur alone cannot serve as a reliable filtering criterion.

Observations from the Min Side Distribution¶

The min_side distribution is strongly right-skewed, with a clear concentration of faces at small sizes (roughly 20–60 px).

This indicates that most detected faces are small, consistent with crowded group images where many individuals are far from the camera.

The number of faces decreases rapidly as min_side increases, with only a small fraction of large, high-resolution faces forming a long tail.

Faces with very large min_side values (e.g., >150 px) are rare, implying that foreground faces represent a minority of the dataset.

Joint Interpretation¶

The dataset is dominated by small faces, many of which may have acceptable blur scores but still lack sufficient spatial resolution for reliable emotion recognition.

The presence of high blur scores among predominantly small faces reinforces that numerical sharpness does not guarantee semantic usefulness.

Overall, the plots show that face size is the primary limiting factor, while blur acts as a secondary, noisier indicator of quality.

How Many Faces Are Retained Under Different Quality Filters¶

This cell explores how many extracted face crops would be retained under

different simple quality filtering criteria, based on face size

(min_side) and image sharpness (blur_score).

The goal of this analysis is not to decide final filtering rules, but to understand how sensitive data retention is to different quality thresholds before labeling or training.

What this cell computes¶

For a range of candidate thresholds, the cell computes:

- the number of faces that satisfy a minimum face size requirement (min_side ≥ threshold)

- the number of faces that also satisfy a minimum sharpness requirement (blur_score ≥ threshold)

- the fraction of the total dataset that would remain under each setting

The output therefore reflects retention rates, not final inclusion decisions.

Interpretation of the sharpness metric (blur_score)¶

The blur_score is computed as the variance of the Laplacian, a standard

image-processing measure of high-frequency content. In this formulation:

- lower values correspond to blurrier or smoother images

- higher values correspond to sharper images with stronger edge responses

Although referred to as blur_score for historical reasons, this quantity

functions as a sharpness proxy, and is therefore thresholded using

blur_score ≥ min_sharpness in this analysis.

Observations enabled by this analysis¶

Increasing the min_side threshold results in a rapid drop in retained faces, reflecting the fact that most faces in group images are small.

Increasing the sharpness threshold further reduces retention, but its effect is generally secondary to face size, indicating that resolution is the dominant limiting factor.

No single combination of size and sharpness thresholds preserves a large fraction of faces while guaranteeing high visual quality.

This highlights a fundamental trade-off: aggressive hard filtering improves average quality but significantly reduces coverage of individuals in the scene.

Why this cell does not filter data yet¶

This analysis is diagnostic, not prescriptive.

Hard thresholds are intentionally explored here to:

- make the cost of filtering explicit

- reveal how brittle binary decisions can be in crowded scenes

- motivate a softer notion of face quality

Rather than discarding faces outright, subsequent stages treat quality as a continuous spectrum, enabling prioritization, weighting, and adaptive use of face crops.

Key takeaway¶

Simple size and sharpness thresholds can dramatically reduce data retention in crowded group images. Understanding this sensitivity motivates the use of a continuous, composite face quality score rather than hard filtering.

# How many faces you keep under different quality filters (for labeling/training later).

def usable_rate(min_side_thr, blur_thr):
    usable = df[(df["min_side"] >= min_side_thr) & (df["blur_score"] >= blur_thr)]
    return len(usable), len(usable) / max(len(df), 1)

for ms in [24, 32, 40]:
    for bt in [30, 50, 80]:
        n, r = usable_rate(ms, bt)
        print(f"min_side>={ms}, blur>={bt}: usable={n}/{len(df)} ({r:.2%})")

import numpy as np

import matplotlib.pyplot as plt

N = len(df)

min_side_grid = np.arange(16, 97, 4) # 16,20,...,96

sharp_grid = np.arange(0, 401, 25) # 0,25,...,400 (blur_score is sharpness)

def usable_pct(min_side_thr, sharp_thr):
    usable = df[(df["min_side"] >= min_side_thr) & (df["blur_score"] >= sharp_thr)]
    return 100.0 * len(usable) / max(N, 1)

# 1) Usable % vs min_side for a few sharpness thresholds

plt.figure(figsize=(9,5))

for sharp_thr in [0, 50, 100, 200]:
    y = [usable_pct(ms, sharp_thr) for ms in min_side_grid]
    plt.plot(min_side_grid, y, marker="o", label=f"sharpness ≥ {sharp_thr}")

plt.title("Usable % vs min_side threshold (for several sharpness thresholds)")

plt.xlabel("min_side threshold (px)")

plt.ylabel("usable faces (%)")

plt.legend()

plt.show()

# 2) Usable % vs sharpness for a few min_side thresholds

plt.figure(figsize=(9,5))

for ms in [16, 24, 32, 48]:
    y = [usable_pct(ms, s) for s in sharp_grid]
    plt.plot(sharp_grid, y, marker="o", label=f"min_side ≥ {ms}px")

plt.title("Usable % vs sharpness threshold (for several min_side thresholds)")

plt.xlabel("sharpness threshold (Laplacian variance)")

plt.ylabel("usable faces (%)")

plt.legend()

plt.show()

import numpy as np

import pandas as pd

min_side_grid = [16, 20, 24, 28, 32, 40, 48, 64]

sharp_grid = [0, 25, 50, 80, 120, 200, 300]

N = len(df)

def usable_pct(min_side_thr, sharp_thr):
    usable = df[(df["min_side"] >= min_side_thr) & (df["blur_score"] >= sharp_thr)]
    return 100.0 * len(usable) / max(N, 1)

heat = pd.DataFrame(
    [[usable_pct(ms, s) for s in sharp_grid] for ms in min_side_grid],
    index=[f"min≥{ms}" for ms in min_side_grid],
    columns=[f"sharp≥{s}" for s in sharp_grid],
)

heat

import matplotlib.pyplot as plt

plt.figure(figsize=(10,4))

plt.imshow(heat.values, aspect="auto")

plt.xticks(range(len(heat.columns)), heat.columns, rotation=45, ha="right")

plt.yticks(range(len(heat.index)), heat.index)

plt.title("Usable faces (%) by min_side and sharpness thresholds")

plt.xlabel("Sharpness threshold")

plt.ylabel("Min-side threshold")

plt.colorbar(label="usable %")

plt.tight_layout()

plt.show()

Observations from the Quality Threshold Sensitivity Plots¶

1. Usable Faces vs min_side Threshold¶

The usable face percentage decreases monotonically and steeply as the min_side threshold increases across all sharpness settings.

For low min_side thresholds (≈16–24 px), a large fraction of faces is retained (>80%), while increasing the threshold toward larger values (>80 px) reduces retention to below ~20%.

Curves corresponding to different sharpness thresholds are approximately parallel, indicating that face size dominates retention behavior independently of sharpness constraints.

This demonstrates that face size is the primary limiting factor in crowded group images.

2. Usable Faces vs Sharpness Threshold¶

For a fixed min_side, increasing the sharpness threshold leads to a gradual and smooth decline in usable faces.

The impact of sharpness filtering is less severe than size filtering, particularly at smaller face sizes.

Larger min_side thresholds amplify the effect of sharpness constraints, but even then, the decline remains continuous rather than abrupt.

This suggests that sharpness is a secondary, refining factor rather than a decisive gate for usability.

3. Joint Effect of min_side and Sharpness (Heatmap)¶

The heatmap reveals a smooth gradient from high retention (low thresholds) to low retention (high thresholds), with no sharp boundaries.

There is no clear threshold combination that simultaneously preserves a high percentage of faces while enforcing strict quality constraints.

Retention decreases continuously as either face size or sharpness requirements become more restrictive.

Overall Interpretation¶

The dataset is highly sensitive to min_side thresholds, confirming that most detected faces are small and that hard size filtering rapidly reduces coverage.

Sharpness thresholds influence usability more gently and act as a continuous modifier rather than a binary filter.

The absence of natural cutoff points across all three plots indicates that hard thresholding is brittle and inevitably trades coverage for quality.

These observations motivate treating face quality as a continuous spectrum rather than applying strict inclusion/exclusion rules.

# Assign discrete quality bins based on face size and sharpness

# blur_score is Laplacian variance (higher = sharper)

def assign_quality_bin(row):
    if row["min_side"] >= 48 and row["blur_score"] >= 100:
        return "high"
    elif row["min_side"] >= 24 and row["blur_score"] >= 50:
        return "mid"
    else:
        return "low"

df["quality_bin"] = df.apply(assign_quality_bin, axis=1)

# Inspect distribution

bin_counts = df["quality_bin"].value_counts()

bin_percent = df["quality_bin"].value_counts(normalize=True) * 100

bin_summary = (
    pd.DataFrame({
        "count": bin_counts,
        "percent (%)": bin_percent.round(2),
    })
    .sort_index()
)

bin_summary

df.groupby("quality_bin")[["min_side", "blur_score"]].describe()

Discrete Face Quality Binning¶

Based on the sensitivity analysis of face size (min_side) and sharpness

(blur_score), faces are grouped into three discrete quality bins:

high, mid, and low quality.

This binning step complements the later composite quality score by providing a human-interpretable categorization of face usability.

Rationale¶

The earlier threshold sweeps and visualizations show that:

- Face quality varies continuously rather than exhibiting natural cutoff points

- Face size dominates usability, with sharpness acting as a secondary modifier

- Hard filtering would discard a large fraction of individuals in group scenes

To balance data coverage, annotation effort, and interpretability, faces are assigned to coarse quality tiers rather than being removed outright.

Quality Bin Definitions¶

High-quality faces

- Large enough to preserve facial detail

- Sufficiently sharp for confident emotion annotation

- Typically foreground individuals

Criteria:

min_side ≥ 48 px AND blur_score ≥ 100

Mid-quality faces

- Facial structure is visible but resolution or sharpness is limited

- Emotion annotation may carry moderate uncertainty

- Important for robustness and generalization

Criteria:

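min_side ≥ 24 px AND blur_score ≥ 50, excluding faces that already meet the high-quality criteria. Note that a large face (min_side ≥ 48 px) with 50 ≤ blur_score < 100 therefore falls into this bin.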

Low-quality faces

- Small, blurred, or noisy

- Emotion cues are ambiguous

- Represent individuals present in the group but are difficult to label reliably

Criteria:

min_side < 24 px OR blur_score < 50
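As a cross-check of the three definitions, the same rules can be expressed in vectorized form. The sketch below uses `np.select` on a small toy table; the values are made up to hit each branch, and on the real metadata the row-wise `assign_quality_bin` function remains the reference.

```python
import numpy as np
import pandas as pd

# Toy rows chosen to exercise each rule (not real data).
toy = pd.DataFrame({
    "min_side":   [64, 64, 30, 30, 16, 80],
    "blur_score": [150, 70, 60, 20, 300, 40],
})

# Conditions are evaluated in order, so "high" takes precedence over "mid".
is_high = (toy["min_side"] >= 48) & (toy["blur_score"] >= 100)
is_mid = (toy["min_side"] >= 24) & (toy["blur_score"] >= 50)

toy["quality_bin"] = np.select([is_high, is_mid], ["high", "mid"], default="low")
print(toy)
```

The second row (large but only moderately sharp) lands in mid, and the fifth row (very sharp but tiny) lands in low, which matches the intent that a high blur_score alone does not rescue a small face.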

Why Binning Is Used¶

Quality binning serves several purposes:

- Prioritizes high-quality faces for annotation

- Enables stratified sampling in labeling workflows

- Improves interpretability and debugging

- Allows controlled experiments across quality tiers

Rather than discarding low-quality faces, binning preserves group composition while explicitly modeling uncertainty.
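One way the bins could support stratified sampling for labeling is sketched below. The per-bin budget and the toy table are hypothetical; only the `quality_bin` and `crop_blob` column names come from the notebook.

```python
import pandas as pd

# Toy metadata: 2 high, 4 mid, 6 low quality crops (illustrative only).
toy = pd.DataFrame({
    "crop_blob": [f"crop_{i:02d}.jpg" for i in range(12)],
    "quality_bin": ["high"] * 2 + ["mid"] * 4 + ["low"] * 6,
})

# Hypothetical labeling budget per bin.
budget = {"high": 2, "mid": 3, "low": 3}

parts = []
for bin_name, n in budget.items():
    pool = toy[toy["quality_bin"] == bin_name]
    # Never request more rows than the bin contains.
    parts.append(pool.sample(n=min(n, len(pool)), random_state=7))

labeling_batch = pd.concat(parts, ignore_index=True)
print(labeling_batch["quality_bin"].value_counts())
```

Fixing the budget per bin keeps low-quality faces represented in the labeled set without letting them crowd out the scarcer high-quality ones.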

Relationship to Composite Quality Score¶

Quality bins provide coarse, interpretable categories, while the composite quality score provides fine-grained weighting within and across bins.

The two mechanisms are complementary:

- Bins support annotation strategy and analysis

- Composite scores support ranking, weighting, and aggregation

CROPS_PREFIX = "group_emotion_out/retinaface_v1/face_crops"

# Cell 79 (updated): visualize face crops by quality bin (crop_blob points to GCS object path)

from google.cloud import storage

import numpy as np

import cv2

# Preconditions

assert "crop_blob" in df.columns, "Expected df to have a 'crop_blob' column."

assert "quality_bin" in df.columns, "Run the quality binning cell before this."

client = storage.Client()

bucket = client.bucket(BUCKET_NAME)

def load_rgb_from_gcs_blob(gs_uri: str):
    """Download an image from GCS and decode into RGB (numpy array)."""
    # Extract bucket name and blob name from the full GCS URI
    parts = gs_uri.replace("gs://", "").split("/", 1)
    bucket_name = parts[0]
    blob_name = parts[1]

    # Use the client to get the correct bucket object
    local_bucket = client.bucket(bucket_name)
    data = local_bucket.blob(blob_name).download_as_bytes()
    arr = np.frombuffer(data, np.uint8)
    bgr = cv2.imdecode(arr, cv2.IMREAD_COLOR)
    if bgr is None:
        return None
    return cv2.cvtColor(bgr, cv2.COLOR_BGR2RGB)

def save_rgb_to_gcs(rgb: np.ndarray, gs_uri: str) -> None:
    """Upload an RGB numpy image to GCS."""
    bucket_name, blob_name = gs_uri.replace("gs://", "").split("/", 1)

    # Re-initialize bucket in case the client is from an earlier context without current project scope
    local_bucket = client.bucket(bucket_name)
    blob = local_bucket.blob(blob_name)

    # Convert RGB to BGR for OpenCV imencode
    bgr = cv2.cvtColor(rgb, cv2.COLOR_RGB2BGR)
    _, img_encoded = cv2.imencode('.jpg', bgr)
    blob.upload_from_string(img_encoded.tobytes(), content_type='image/jpeg')

import matplotlib.pyplot as plt

import pandas as pd

import math

def show_random_faces_by_bin(df, bin_name: str, n=12, seed=7):
    d = df[df["quality_bin"] == bin_name].copy()
    if len(d) == 0:
        print(f"No samples found for quality_bin='{bin_name}'.")
        return

    samples = d.sample(n=min(n, len(d)), random_state=seed)
    cols = 6
    rows = math.ceil(len(samples) / cols)

    plt.figure(figsize=(cols * 3, rows * 3))
    for i, (_, row) in enumerate(samples.iterrows(), start=1):
        img = load_rgb_from_gcs_blob(row["crop_blob"])
        ax = plt.subplot(rows, cols, i)
        ax.axis("off")
        if img is None:
            ax.set_title("Failed load", fontsize=9)
            continue
        ax.imshow(img)

        # Titles: min_side, blur_score (sharpness proxy), and quality_score if available
        ms = int(row["min_side"]) if "min_side" in row and pd.notna(row["min_side"]) else None
        bs = float(row["blur_score"]) if "blur_score" in row and pd.notna(row["blur_score"]) else None
        qs = float(row["quality_score"]) if "quality_score" in row and pd.notna(row["quality_score"]) else None

        parts = []
        if ms is not None: parts.append(f"ms={ms}")
        if bs is not None: parts.append(f"sharp={bs:.0f}")  # blur_score is Laplacian variance (higher = sharper)
        if qs is not None: parts.append(f"q={qs:.2f}")
        ax.set_title(", ".join(parts), fontsize=9)

    plt.suptitle(f"Random face crops: quality_bin = {bin_name}", fontsize=14)
    plt.tight_layout()
    plt.show()

# Visual audit per bin

show_random_faces_by_bin(df, "high", n=12, seed=7)

show_random_faces_by_bin(df, "mid", n=12, seed=7)

show_random_faces_by_bin(df, "low", n=12, seed=7)

import matplotlib.pyplot as plt

import pandas as pd

import numpy as np

import math

assert "source_blob" in df.columns, "Expected df to contain 'source_blob' (original image identifier)."

def sample_equal_per_source(df_bin: pd.DataFrame, k_per_source=2, seed=7):
    """
    Sample up to k_per_source faces per source image.
    This prevents a single crowded image from dominating the sample grid.
    """
    rng = np.random.default_rng(seed)
    out_rows = []

    # Shuffle sources for variety
    sources = df_bin["source_blob"].dropna().unique().tolist()
    rng.shuffle(sources)

    for src in sources:
        g = df_bin[df_bin["source_blob"] == src]
        if len(g) == 0:
            continue
        take = min(k_per_source, len(g))
        out_rows.append(g.sample(n=take, random_state=seed))

    if not out_rows:
        return df_bin.head(0)
    return pd.concat(out_rows, axis=0).reset_index(drop=True)

def show_balanced_faces_by_bin(df, bin_name: str, k_per_source=2, max_faces=36, seed=7):
    d = df[df["quality_bin"] == bin_name].copy()
    if len(d) == 0:
        print(f"No samples found for quality_bin='{bin_name}'.")
        return

    balanced = sample_equal_per_source(d, k_per_source=k_per_source, seed=seed)

    # Cap total faces shown to keep grids readable
    if len(balanced) > max_faces:
        balanced = balanced.sample(n=max_faces, random_state=seed)

    cols = 6
    rows = math.ceil(len(balanced) / cols) if len(balanced) else 1

    plt.figure(figsize=(cols * 3, rows * 3))
    for i, row in enumerate(balanced.itertuples(index=False), start=1):
        img = load_rgb_from_gcs_blob(row.crop_blob)
        ax = plt.subplot(rows, cols, i)
        ax.axis("off")
        if img is None:
            ax.set_title("Failed load", fontsize=9)
            continue
        ax.imshow(img)

        # Display key metadata above each crop
        parts = []
        if hasattr(row, "min_side") and pd.notna(row.min_side):
            parts.append(f"ms={int(row.min_side)}")
        if hasattr(row, "blur_score") and pd.notna(row.blur_score):
            parts.append(f"sharp={float(row.blur_score):.0f}")  # Laplacian variance (higher=sharper)
        if hasattr(row, "quality_score") and pd.notna(row.quality_score):
            parts.append(f"q={float(row.quality_score):.2f}")
        ax.set_title(", ".join(parts), fontsize=9)

    # Report the number of faces actually shown, not the cap
    plt.suptitle(
        f"Balanced sample: quality_bin={bin_name} (≤{k_per_source} faces/source, {len(balanced)} faces shown)",
        fontsize=14
    )
    plt.tight_layout()
    plt.show()

# Balanced visual audit per bin

show_balanced_faces_by_bin(df, "high", k_per_source=2, max_faces=36, seed=7)

show_balanced_faces_by_bin(df, "mid", k_per_source=2, max_faces=36, seed=7)

show_balanced_faces_by_bin(df, "low", k_per_source=2, max_faces=36, seed=7)

df.head()

Matplotlib scales every face crop to the same grid cell size. As a result, some crops with a high blur_score look unclear in the grid: their min_side is actually small, and they have been upscaled to fit the display.

Composite Face Quality Score¶

# Reduces sensitivity to outliers and stabilizes scores across datasets.

def robust_norm(x, p_low=5, p_high=95):
    lo, hi = np.percentile(x, [p_low, p_high])
    return np.clip((x - lo) / (hi - lo + 1e-6), 0, 1)

df["size_norm"] = robust_norm(df["min_side"])

df["sharp_norm"] = robust_norm(df["blur_score"])

# Nonlinear (square-root) compression helps to dampen extremes.
# Justification: doubling resolution does not double usefulness.

size_term = np.sqrt(df["size_norm"])

sharp_term = np.sqrt(df["sharp_norm"])

# size >> sharpness

# weights grounded in the sensitivity plots

df["quality_score"] = 0.7 * size_term + 0.3 * sharp_term

In addition to discrete quality bins, a continuous face quality score is defined to support ranking, weighting, and aggregation of faces in downstream stages.

The score combines two interpretable signals:

- face size (min_side)

- image sharpness (blur_score, Laplacian variance)

Unlike hard thresholds, the composite score treats quality as a continuous spectrum and avoids discarding faces outright.

Design Rationale¶

Empirical analysis shows that:

- face size is the dominant driver of usability

- sharpness refines quality but is noisy, especially for small faces

- extreme values should not dominate the score

Accordingly, both signals are robustly normalized using percentiles and combined with unequal weights that reflect their relative importance.

Score Definition¶

- Robust percentile-based normalization is applied to both signals.

- A nonlinear compression reduces sensitivity to extreme values.

- A weighted sum emphasizes face size over sharpness.

The resulting score lies in [0, 1], with higher values indicating higher expected usability.
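The three steps can be traced on a handful of made-up faces. The sketch below repeats the notebook's `robust_norm` and the 0.7 / 0.3 weighting; the wrapper name `composite_quality` and the toy arrays are assumptions for illustration.

```python
import numpy as np

def robust_norm(x, p_low=5, p_high=95):
    """Percentile-based min-max normalization, clipped to [0, 1]."""
    x = np.asarray(x, dtype=float)
    lo, hi = np.percentile(x, [p_low, p_high])
    return np.clip((x - lo) / (hi - lo + 1e-6), 0, 1)

def composite_quality(min_side, blur_score, w_size=0.7, w_sharp=0.3):
    """Weighted sum of square-root-compressed, normalized size and sharpness."""
    size_term = np.sqrt(robust_norm(min_side))
    sharp_term = np.sqrt(robust_norm(blur_score))
    return w_size * size_term + w_sharp * sharp_term

# Toy faces: the first is tiny but very "sharp", the last is large but blurry.
min_side = np.array([12, 20, 28, 36, 48, 64, 96, 200])
blur_score = np.array([900, 40, 60, 80, 120, 150, 200, 30])

q = composite_quality(min_side, blur_score)
for ms, bs, score in zip(min_side, blur_score, q):
    print(f"min_side={ms:>3}  blur_score={bs:>3}  quality_score={score:.2f}")
```

In this toy set the tiny, very sharp face scores 0.30 and the large, blurry face scores 0.70, so a high Laplacian variance cannot lift a small crop above a large one.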

Relationship to Quality Bins¶

Quality bins provide coarse, interpretable categories for annotation and analysis, while the composite score enables fine-grained weighting and ranking.

The two mechanisms are complementary:

- bins guide human workflows

- the score supports algorithmic aggregation and modeling

df.head()

# Persist updated face metadata with quality information

# Uses the existing project layout exactly as provided

BUCKET_NAME = "ranjana-group-emotion-data"

META_BLOB = "group_emotion_out/retinaface_v1/metadata/faces_metadata.csv"

OUT_META_BLOB = "group_emotion_out/retinaface_v1/metadata/faces_metadata_with_quality.csv"

from google.cloud import storage

import io

client = storage.Client()

bucket = client.bucket(BUCKET_NAME)

# Save dataframe to an in-memory CSV buffer

csv_buffer = io.StringIO()

df.to_csv(csv_buffer, index=False)

# Upload to GCS

blob = bucket.blob(OUT_META_BLOB)

blob.upload_from_string(

csv_buffer.getvalue(),

content_type="text/csv"

)

print(f"Saved updated metadata to: gs://{BUCKET_NAME}/{OUT_META_BLOB}")

Persisting Face Metadata with Quality Annotations¶

The original face metadata extracted using RetinaFace is stored as a CSV file containing detection geometry and crop references.

After computing face size metrics, sharpness measures, quality bins, and a composite quality score, the enriched metadata is persisted as a new CSV file using the same project layout.

This preserves the original metadata while creating a versioned artifact that can be used for labeling, training, and group-level emotion analysis without re-running face detection.

BUCKET_NAME = "ranjana-group-emotion-data"

META_BLOB = "group_emotion_out/retinaface_v1/metadata/faces_metadata.csv" # your current metadata path

OUT_META_BLOB = "group_emotion_out/retinaface_v1/metadata/faces_metadata_with_quality.csv"

!pip -q install pandas

import pandas as pd

from google.cloud import storage

client = storage.Client()

bucket = client.bucket(BUCKET_NAME)

local_meta = "/content/faces_metadata.csv"

bucket.blob(OUT_META_BLOB).download_to_filename(local_meta)

df = pd.read_csv(local_meta)

print("Loaded:", df.shape)

df.head()


Analysis of Face Quality Signals and Composite Score¶

This section analyzes the relationships between individual quality signals, their normalized forms, the composite quality score, and the discrete quality bins.

Correlation Structure¶

import seaborn as sns

import matplotlib.pyplot as plt

analysis_cols = [

"min_side",

"blur_score",

"size_norm",

"sharp_norm",

"quality_score"

]

corr = df[analysis_cols].corr()

plt.figure(figsize=(6,5))

sns.heatmap(corr, annot=True, cmap="coolwarm", fmt=".2f")

plt.title("Correlation Between Face Quality Signals")

plt.show()

Correlation analysis shows that the composite quality score is strongly

correlated with normalized face size (size_norm) and moderately correlated

with raw face size (min_side). In contrast, correlations with sharpness

(blur_score) are weak after normalization.

This confirms that face size is the dominant and most reliable contributor to face usability, while sharpness acts as a secondary, refining signal.

Quality Score vs Face Size and Sharpness¶

fig, axes = plt.subplots(1, 2, figsize=(12,4))

axes[0].scatter(df["min_side"], df["quality_score"], alpha=0.4, s=10)

axes[0].set_xlabel("min_side (px)")

axes[0].set_ylabel("quality_score")

axes[0].set_title("Quality Score vs Face Size")

axes[1].scatter(df["blur_score"], df["quality_score"], alpha=0.4, s=10)

axes[1].set_xlabel("blur_score (sharpness)")

axes[1].set_ylabel("quality_score")

axes[1].set_title("Quality Score vs Sharpness")

plt.tight_layout()

plt.show()

The quality score exhibits a clear monotonic relationship with face size, indicating that larger faces consistently yield higher usability. The relationship saturates at larger sizes, reflecting diminishing returns and confirming that nonlinear scaling prevents oversized faces from dominating the score.

The relationship between quality score and sharpness is present but noisy. Highly sharp faces do not automatically receive high quality scores, especially when face size is limited. This behavior is desirable and confirms that the composite score is robust to texture artifacts and background edges.

Alignment with Quality Bins¶

plt.figure(figsize=(6,4))

sns.boxplot(

data=df,

x="quality_bin",

y="quality_score",

order=["low", "mid", "high"]

)

plt.title("Quality Score Distribution by Quality Bin")

plt.xlabel("quality_bin")

plt.ylabel("quality_score")

plt.show()

Quality score distributions increase systematically from low to mid to high quality bins, with partial overlap between bins. This demonstrates that bins and the composite score are consistent yet complementary: bins provide interpretable categories, while the score captures continuous variation within each bin.

Face Crop Geometry¶

df["aspect_ratio"] = df["w"] / df["h"]

plt.figure(figsize=(6,4))

plt.hist(df["aspect_ratio"], bins=40)

plt.title("Distribution of Face Crop Aspect Ratios")

plt.xlabel("width / height")

plt.ylabel("count")

plt.show()

The distribution of face crop aspect ratios is tightly concentrated, indicating consistent cropping behavior. This supports downstream resizing and model training without requiring additional geometric normalization.

Summary¶

Overall, the composite quality score behaves as intended: it reflects dominant face size effects, incorporates sharpness conservatively, avoids extreme saturation, and aligns well with discrete quality bins. These properties make it suitable for ranking, weighting, and aggregation in downstream emotion recognition tasks.

Distribution of the Composite Face Quality Score¶

This section examines the standalone distribution of the composite face quality score using both a histogram (count-based view) and a kernel density estimate (KDE) (smooth distributional view). Together, these visualizations provide a complete picture of how face quality is distributed across the dataset and serve as a numerical sanity check before the score is used in downstream tasks.

plt.figure(figsize=(6,4))

sns.histplot(

df["quality_score"],

bins=30,

stat="density",

alpha=0.3

)

sns.kdeplot(

df["quality_score"],

clip=(0,1)

)

plt.title("Histogram + KDE of Composite Quality Score")

plt.xlabel("quality_score (0 to 1)")

plt.ylabel("density")

plt.show()

Histogram + KDE: Smooth Distributional View¶

Overlaying a kernel density estimate (KDE) on the histogram provides a smooth, bin-independent view of the same distribution.

From the KDE, we observe:

- A single dominant mode centered in the mid-quality range, indicating that the quality score behaves as a continuous latent variable rather than forming discrete clusters.

- A right-skewed shape, with probability mass gradually decreasing toward higher quality values. This aligns with expectations for group imagery, where only a subset of faces are large, frontal, and sharply resolved.

- Smooth tails on both ends of the distribution, with no sharp spikes or abrupt cutoffs. This indicates that small changes in underlying signals (face size or sharpness) translate into gradual changes in the composite score.

The smoothness of the KDE confirms that the quality score is numerically stable and suitable for downstream operations that rely on continuity, such as ranking, weighting, or aggregation.

import matplotlib.pyplot as plt

plt.figure(figsize=(8,5))

plt.hist(df["quality_score"].dropna(), bins=50)

plt.title("Distribution of composite quality_score")

plt.xlabel("quality_score (0 to 1)")

plt.ylabel("count")

plt.show()

Histogram: Count-Based Perspective¶

The histogram shows the number of detected faces falling into different ranges of the composite quality score.

Several key observations emerge:

- The majority of faces lie in the low-to-mid quality range, roughly between 0.3 and 0.7. This reflects the natural composition of group images, where many faces are small, partially occluded, or captured at a distance.

- High-quality faces (scores above ~0.8) are present but relatively rare, forming a thin right tail of the distribution.

- Very low-quality faces exist but do not dominate the dataset, indicating that the pipeline does not collapse a large fraction of faces into unusable extremes.

- There is no excessive concentration at the boundaries (near 0 or 1), suggesting that the normalization and scaling steps prevent saturation.

The histogram confirms that the dataset contains a broad and realistic spectrum of face qualities rather than an artificially filtered or overly idealized collection.

Implications for Downstream Use¶

Taken together, these plots validate several important properties of the composite quality score:

- The score preserves dataset difficulty, rather than collapsing most faces into high-quality values.

- It avoids pathological saturation at extreme values.

- It behaves smoothly and continuously, making it appropriate as a soft weighting signal rather than a hard filtering criterion.

Importantly, this analysis is intended as a validation step only. The histogram and KDE are not used to define thresholds or bins; those decisions are handled separately using explicit size and sharpness criteria. Here, the goal is to confirm that the composite score is numerically well-behaved when considered on its own.
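A numerical check can complement the visual inspection above. The sketch below is not part of the original pipeline: the helper name `saturation_report` and the 0.05 / 0.95 boundary cut-offs are assumptions chosen for illustration. It reports the share of scores sitting near the boundaries together with a few quantiles, and it uses synthetic scores so that it runs on its own.

```python
import numpy as np

def saturation_report(scores, low=0.05, high=0.95):
    """Summarize how much of a [0, 1] score sits near the boundaries.

    `low` and `high` are illustrative cut-offs, not thresholds used by the pipeline.
    """
    s = np.asarray(scores, dtype=float)
    s = s[~np.isnan(s)]  # ignore missing scores, similar to dropna()
    q10, q50, q90 = np.quantile(s, [0.10, 0.50, 0.90])
    return {
        "n": int(s.size),
        "frac_near_0": float((s <= low).mean()),
        "frac_near_1": float((s >= high).mean()),
        "q10": float(q10),
        "q50": float(q50),
        "q90": float(q90),
    }

# Synthetic stand-in for df["quality_score"]; replace with the real column.
rng = np.random.default_rng(0)
demo_scores = rng.beta(4.0, 4.0, size=5000)
report = saturation_report(demo_scores)
print(report)
```

Small values of `frac_near_0` and `frac_near_1` would support the claim that the score does not saturate; the cut-offs should be adjusted to whatever counts as "near the boundary" for the dataset at hand.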

Analyses deferred to later stages

Only two analyses are worth revisiting later, and both depend on downstream results:

A. Error-aware analysis (post-model)

Once an emotion model has been trained:

- compare misclassifications against quality_score

- ask whether low quality explains the errors

This is model evaluation, not preprocessing analysis.

B. Group-level weighting sensitivity

When aggregating group emotion:

- compare unweighted vs quality-weighted aggregation

- measure the impact on group-level prediction stability

This also belongs downstream, not in the current step.
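When model predictions become available, the error-aware check in (A) could look roughly like the sketch below. The column name `is_error`, the helper name `error_rate_by_quality`, and the use of five equal-width bins are assumptions for illustration. The data is synthetic and carries no real results.

```python
import numpy as np
import pandas as pd

def error_rate_by_quality(df, n_bins=5):
    """Return the misclassification rate per equal-width quality_score bin.

    Assumes hypothetical columns: quality_score in [0, 1] and a boolean is_error.
    """
    edges = np.linspace(0.0, 1.0, n_bins + 1)
    bins = pd.cut(df["quality_score"], bins=edges, include_lowest=True)
    return (
        df.groupby(bins, observed=False)["is_error"]
        .agg(error_rate="mean", n_faces="count")
        .reset_index()
    )

# Synthetic example only: errors are made more likely for low-quality faces.
rng = np.random.default_rng(0)
quality = rng.uniform(0.0, 1.0, size=2000)
is_error = rng.uniform(size=2000) < (0.6 - 0.5 * quality)
demo = pd.DataFrame({"quality_score": quality, "is_error": is_error})

table = error_rate_by_quality(demo)
print(table)
```

If the error rate falls steadily from the lowest to the highest quality bin on real predictions, that would suggest quality_score explains part of the model's errors.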

BUCKET_NAME = "ranjana-group-emotion-data"

META_BLOB = "group_emotion_out/retinaface_v1/metadata/faces_metadata.csv" # your current metadata path

OUT_META_BLOB = "group_emotion_out/retinaface_v1/metadata/faces_metadata_with_quality.csv"

import pandas as pd

from google.cloud import storage

client = storage.Client()

bucket = client.bucket(BUCKET_NAME)

local_meta = "/content/faces_metadata.csv"

bucket.blob(OUT_META_BLOB).download_to_filename(local_meta)

df = pd.read_csv(local_meta)

print("Loaded:", df.shape)

df.head()

EMOTIONS = ["angry","disgust","fear","happy","sad","surprise","neutral"]

K = len(EMOTIONS)

# Placeholder: fill with None for now (later replaced by model outputs)

df["emotion_probs"] = None

At this stage, we have:

- face crops (crop_blob) grouped by source_blob

- a continuous face reliability estimate (quality_score)

- but no true per-face emotion labels yet

To validate the aggregation logic before adding a face emotion model, we use mock per-face emotion probabilities on a real source image. This lets us verify that our aggregation behaves sensibly and that quality weighting changes the result in an interpretable way.

A) Unweighted aggregation (baseline)

All faces contribute equally:

$$ P_{\text{group}}^{\text{unweighted}}(k) = \frac{1}{N}\sum_{i=1}^{N} P_i(k) $$

This baseline is useful, but in group scenes it can be dominated by many small, low-quality faces.

B) Quality-weighted aggregation (proposed)

We incorporate face reliability using weights derived from quality_score:

$$ P_{\text{group}}^{\text{weighted}}(k) = \frac{\sum_{i=1}^{N} w_i \, P_i(k)}{\sum_{i=1}^{N} w_i} $$

This is a soft weighting strategy, not a hard filter: low-quality faces are not removed, but their influence is reduced.
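As a small worked illustration of the two formulas, the sketch below uses three hypothetical faces and, for brevity, only two emotion classes. All probabilities and weights are invented for the example.

```python
import numpy as np

# Hypothetical per-face probabilities over two classes: [happy, neutral].
P = np.array([
    [0.9, 0.1],   # large, sharp face
    [0.3, 0.7],   # small, blurry face
    [0.2, 0.8],   # small, blurry face
])
w = np.array([0.8, 0.1, 0.1])  # made-up weights derived from quality_score

# Unweighted: every face counts equally (1/N).
p_unweighted = P.mean(axis=0)

# Weighted: each face counts in proportion to w_i / sum(w).
p_weighted = (w[:, None] * P).sum(axis=0) / w.sum()

print("unweighted:", p_unweighted)
print("weighted:  ", p_weighted)
```

Here the unweighted mean gives about 0.47 for happy, so neutral wins, while the weighted version gives 0.77 for happy because the single reliable face carries most of the weight. No face is discarded in either case.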

C) Per-face contribution analysis (interpretability)

To understand which faces drive the group prediction for a chosen target emotion $k$ (e.g., “happy”), we compute per-face contribution scores.

Unweighted contribution: $$ c_i^{\text{unweighted}}(k) = \frac{1}{N}P_i(k) $$

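Weighted contribution (normalized by the total weight, so that the contributions sum to $P_{\text{group}}^{\text{weighted}}(k)$): $$ c_i^{\text{weighted}}(k) = \frac{w_i \, P_i(k)}{\sum_{j=1}^{N} w_j} $$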

We then:

- compare group distributions (unweighted vs weighted),

- show top contributing faces side by side,

- show contribution histograms side by side.

These diagnostics demonstrate how quality weighting shifts influence away from unreliable faces and toward visually informative faces.
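The contribution scores can be sketched as follows. The helper name `face_contributions` is hypothetical, and the weighted variant divides by $\sum_j w_j$. That normalization is an assumption made here so that the contributions add up to the weighted group probability and can be compared directly with the unweighted $\frac{1}{N}P_i(k)$ terms.

```python
import numpy as np

def face_contributions(face_probs, k, weights=None):
    """Per-face contribution to the group probability of class index k.

    With weights=None each face contributes P_i(k) / N.
    Otherwise each face contributes w_i * P_i(k) / sum_j w_j
    (the normalization is an assumption of this sketch).
    """
    P = np.asarray(face_probs, dtype=float)
    n = P.shape[0]
    if weights is None:
        share = np.full(n, 1.0 / n)
    else:
        w = np.clip(np.asarray(weights, dtype=float), 0.0, None)
        share = w / (w.sum() + 1e-12)
    return share * P[:, k]

# Made-up example: 4 faces, 7 emotion classes, target class index 3 ("happy").
rng = np.random.default_rng(0)
P = rng.dirichlet(np.ones(7), size=4)
w = np.array([0.9, 0.2, 0.1, 0.05])

c_unw = face_contributions(P, k=3)
c_w = face_contributions(P, k=3, weights=w)
top_faces = np.argsort(c_w)[::-1]  # faces ordered by weighted contribution
print(c_unw.sum(), c_w.sum(), top_faces)
```

Because each set of contributions sums to the corresponding group probability, a bar chart of `c_unw` next to `c_w` shows directly how much influence moves between faces when weighting is switched on.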

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

EMOTIONS = ["angry","disgust","fear","happy","sad","surprise","neutral"]

K = len(EMOTIONS)

emotion_to_idx = {e:i for i,e in enumerate(EMOTIONS)}

def weight_from_quality(q, eps=1e-6):

    """Convert quality_score in [0,1] into a nonnegative weight."""

    q = float(q) if pd.notna(q) else 0.0

    q = max(0.0, min(1.0, q))

    return q + eps

def aggregate_probs(face_probs: np.ndarray, weights: np.ndarray = None) -> np.ndarray:

    """

    face_probs: (N, K) rows sum to 1

    weights: (N,) nonnegative or None

    returns: (K,) sums to 1

    """

    face_probs = np.asarray(face_probs, dtype=float)

    assert face_probs.ndim == 2 and face_probs.shape[1] == K

    if weights is None:

        gp = face_probs.mean(axis=0)

    else:

        w = np.asarray(weights, dtype=float).reshape(-1)

        assert len(w) == face_probs.shape[0]

        w = np.clip(w, 0.0, None)

        gp = (face_probs * w[:, None]).sum(axis=0) / (w.sum() + 1e-12)

    gp = np.clip(gp, 0.0, None)

    gp = gp / (gp.sum() + 1e-12)

    return gp

def topk_emotions(group_probs: np.ndarray, k=3):

    idx = np.argsort(group_probs)[::-1][:k]

    return [(EMOTIONS[i], float(group_probs[i])) for i in idx]

Mock per-face emotion probabilities (temporary stand-in)¶

Until we attach real per-face emotion predictions, we create synthetic probability vectors for the faces from a real source image. The purpose is to validate the aggregation + contribution analysis pipeline.

We use scenarios that mimic typical group-image behavior:

- uniform_noise: no dominant emotion signal

- crowd_happy: a few high-quality faces strongly indicate “happy”

- mixed_signal: high-quality faces split across two emotions

def dirichlet_probs(alpha_vec, n, seed=0):

    rng = np.random.default_rng(seed)

    return rng.dirichlet(alpha=np.array(alpha_vec, dtype=float), size=n)

def make_mock_face_probs(df_img: pd.DataFrame, scenario="crowd_happy", seed=0):

    n = len(df_img)

    rng = np.random.default_rng(seed)

    q = df_img["quality_score"].to_numpy()

    thr = np.quantile(q, 0.80) if n >= 5 else (q.max() if n else 1.0)

    strong = q >= thr

    if scenario == "uniform_noise":

        return dirichlet_probs([1.0]*K, n, seed=seed)

    if scenario == "crowd_happy":

        base = dirichlet_probs([1.2]*K, n, seed=seed)

        alpha = [0.6]*K

        alpha[emotion_to_idx["happy"]] = 12.0

        base[strong] = dirichlet_probs(alpha, strong.sum(), seed=seed+1)

        return base

    if scenario == "mixed_signal":

        base = dirichlet_probs([1.2]*K, n, seed=seed)

        alpha_h = [0.6]*K; alpha_h[emotion_to_idx["happy"]] = 10.0

        alpha_s = [0.6]*K; alpha_s[emotion_to_idx["surprise"]] = 10.0

        strong_idx = np.where(strong)[0]

        rng.shuffle(strong_idx)

        half = len(strong_idx)//2

        base[strong_idx[:half]] = dirichlet_probs(alpha_h, half, seed=seed+2)

        base[strong_idx[half:]] = dirichlet_probs(alpha_s, len(strong_idx)-half, seed=seed+3)

        return base

    raise ValueError("scenario must be one of: uniform_noise, crowd_happy, mixed_signal")

Why we use a Dirichlet distribution to generate mock per-face emotion probabilities¶

In this notebook section, we do not yet have a trained face-emotion model, nor manual emotion labels for individual faces. However, we still want to validate and reason about the group emotion aggregation logic using real extracted faces.

To do this, we need synthetic per-face emotion predictions that behave like real model outputs. This is where the Dirichlet distribution is used.

1) What kind of data are we trying to mock?

A face emotion classifier typically outputs a probability vector:

$$ P_i = [P_i(\text{angry}), \dots, P_i(\text{neutral})] $$

with the following properties:

- all probabilities are non-negative

- probabilities sum to 1

- some predictions are uncertain (flat)

- some predictions are confident (peaked)

The mock generator must produce vectors with exactly these properties.

2) Why the Dirichlet distribution is appropriate

The Dirichlet distribution is the canonical distribution over the probability simplex. Sampling from a Dirichlet distribution produces vectors that:

- lie in $[0,1]^K$

- sum to 1

- resemble softmax outputs of a classifier

Formally:

$$ P_i \sim \text{Dirichlet}(\alpha) $$

where the vector $\alpha = [\alpha_1, \dots, \alpha_K]$ controls the shape of the distribution.

This makes Dirichlet an ideal choice for simulating classifier-like probability outputs in a principled way.
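A quick way to see these properties is to draw a few samples and check them directly. The snippet is a minimal sketch; the sample size and seed are arbitrary.

```python
import numpy as np

K = 7  # number of emotion classes
rng = np.random.default_rng(0)

# Draw 1000 mock probability vectors from a symmetric Dirichlet.
samples = rng.dirichlet(alpha=np.ones(K), size=1000)

print(samples.shape)                                 # (1000, 7)
print(bool((samples >= 0).all()))                    # every entry is non-negative
print(bool(np.allclose(samples.sum(axis=1), 1.0)))   # every row sums to 1
```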

3) How the $\alpha$ parameters control prediction behavior

The concentration parameters $\alpha$ determine how “confident” or “uncertain” the generated probabilities are.

Uniform or uncertain predictions

When all $\alpha_k$ are equal, the distribution is symmetric across classes. With $[1,1,\dots,1]$ it is uniform over the simplex:

- no emotion is consistently favored

- predictions are noisy and uninformative

This is used in the uniform_noise scenario to simulate the absence of a clear group emotion.

Mildly structured but still noisy predictions

Using slightly larger and equal values (e.g., $[1.2,1.2,\dots]$) produces probabilities that are still random but less extreme.

This models the majority of faces in a group image, which often show ambiguous or weak emotion signals.

Strongly peaked predictions

If one component of $\alpha$ is much larger than the others, the generated probability vectors become highly concentrated on that class.

Example:

- large $\alpha$ for “happy”

- small $\alpha$ for all other emotions

This simulates confident model outputs for faces that clearly express a given emotion.
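The effect of $\alpha$ can be made concrete through the Dirichlet mean, $\mathbb{E}[P_k] = \alpha_k / \sum_j \alpha_j$. The sketch below compares the three parameter settings used in the mock generator. The helper name `summarize_alpha` and the sample size are assumptions for illustration.

```python
import numpy as np

EMOTIONS = ["angry", "disgust", "fear", "happy", "sad", "surprise", "neutral"]
HAPPY = EMOTIONS.index("happy")

def summarize_alpha(alpha, n=20000, seed=0):
    """Return (analytic mean of 'happy', empirical mean, mean of the per-face max prob)."""
    alpha = np.asarray(alpha, dtype=float)
    rng = np.random.default_rng(seed)
    samples = rng.dirichlet(alpha, size=n)
    analytic = alpha[HAPPY] / alpha.sum()
    return analytic, samples[:, HAPPY].mean(), samples.max(axis=1).mean()

flat = [1.0] * 7
mild = [1.2] * 7
peaked = [0.6] * 7
peaked[HAPPY] = 12.0  # same values as the crowd_happy scenario

for name, alpha in [("flat", flat), ("mild", mild), ("peaked", peaked)]:
    analytic, empirical, mean_max = summarize_alpha(alpha)
    print(f"{name:7s} E[happy]={analytic:.3f} empirical={empirical:.3f} mean max prob={mean_max:.3f}")
```

For the peaked setting the analytic mean for happy is 12 / 15.6 ≈ 0.77, compared with 1/7 ≈ 0.14 in the two symmetric settings. The mean of the largest per-face probability gives a rough measure of how confident the mock predictions look under each setting.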

4) Why high-quality faces receive stronger mock signals